Your brain is not a computer

Tim B. Z.

In my first job out of college, I worked as a lab manager for a psychologist. This was part of a passionate, if overly complicated, plan to attend graduate school, which I might have followed through on had life not intervened first. I am often reminded of this experience when a topic in psychology crosses over into the tech industry.

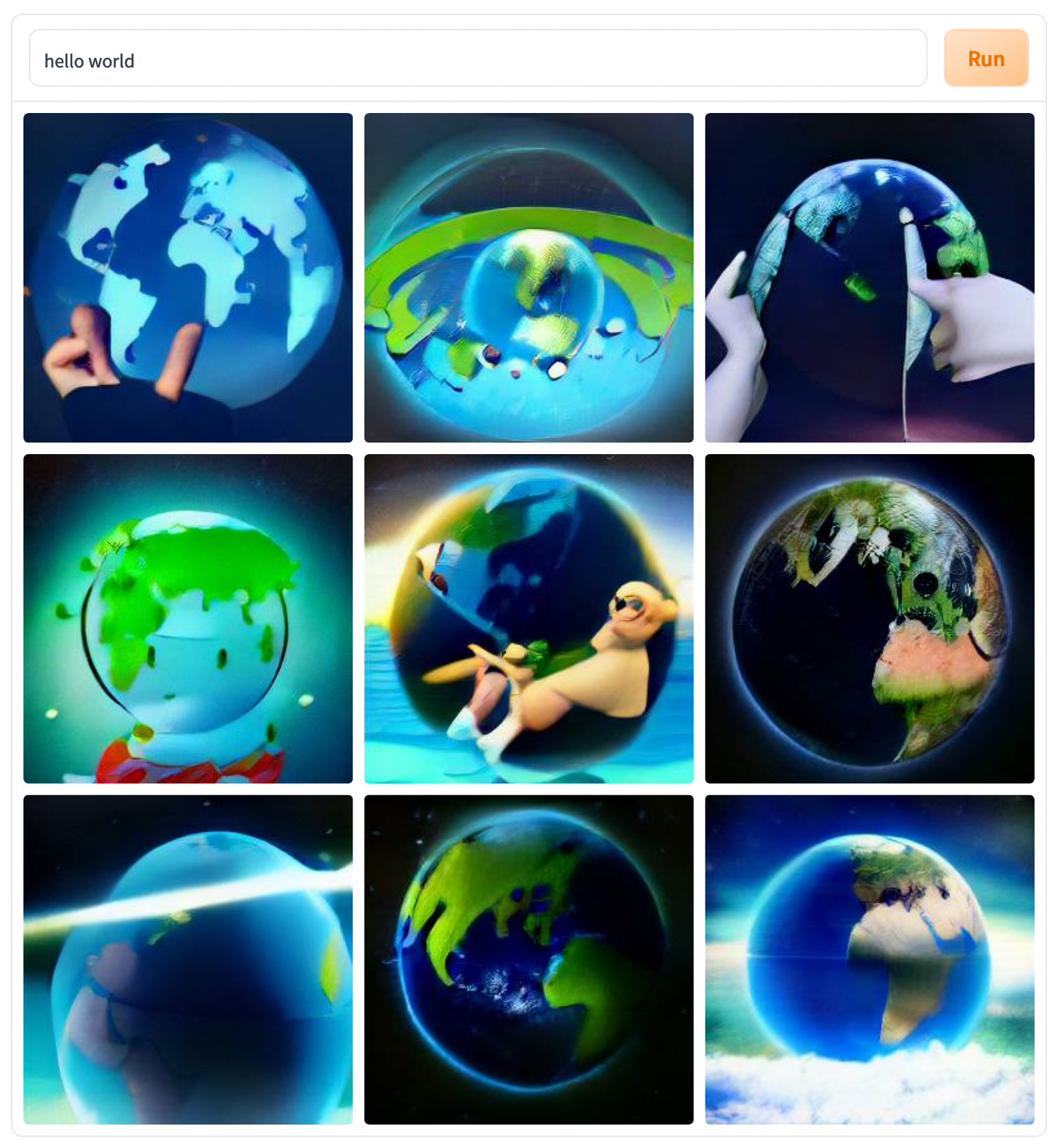

Right now, that topic is artificial intelligence. The Internet is debating the sentience of Google's LaMDA and drinking from the firehose of images created by a bootstrapped DALL-E. Reading armchair debates about the nature of AI, I can't help but notice the widespread assumption that it is possible to engineer a human mind using the principles of computer science. Essentially, many people believe their brains work like computers.

This is not true. This metaphor makes sense at first, but only because it amplifies other popular misconceptions about the brain.

The Computational Mind Theory

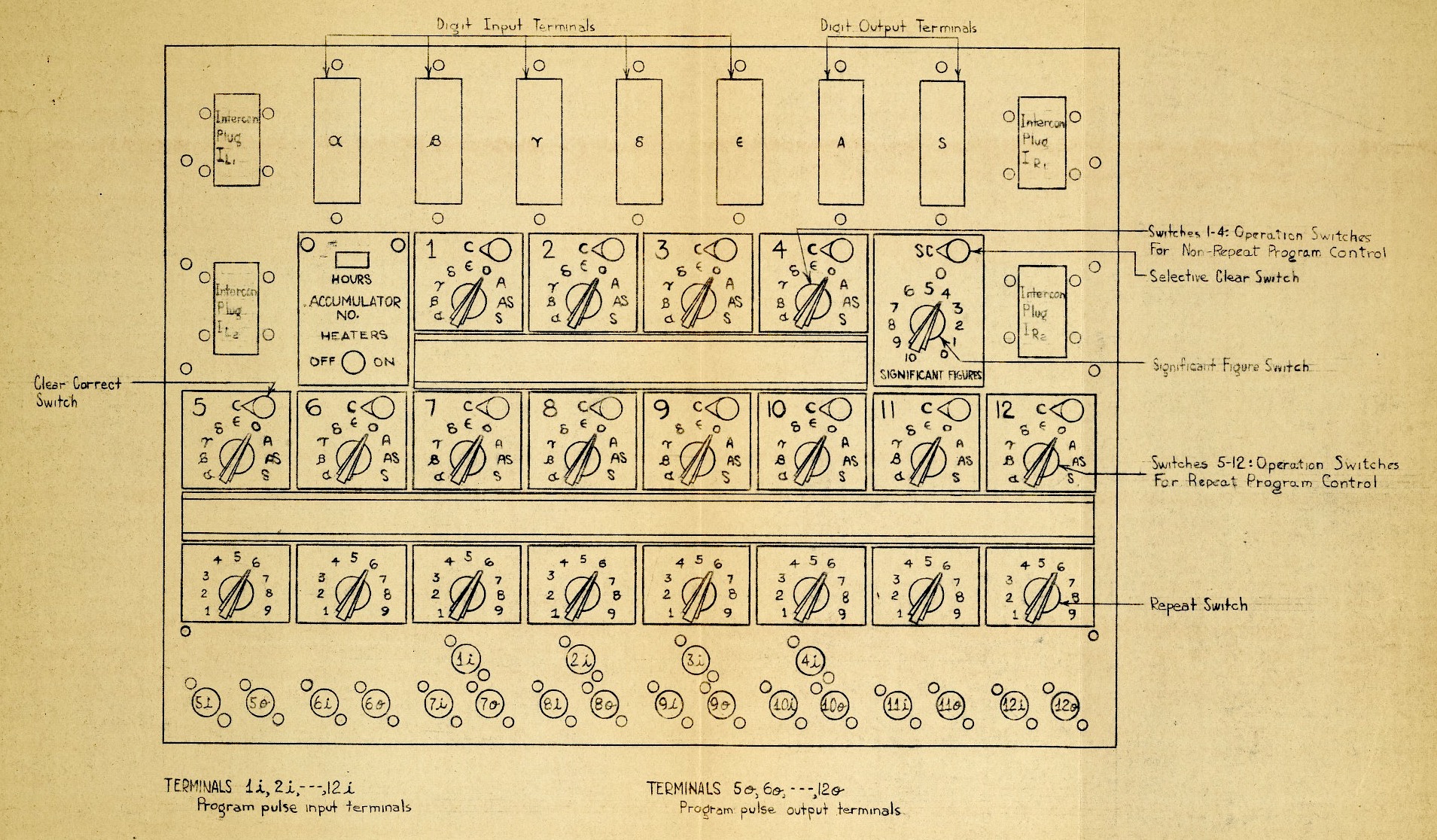

To start, the computational theory of mind is an old idea in the context of modern science. It first appeared in the scientific writings of Warren McCulloch and Walter Pitts in 1943, and it was developed by the philosopher Hilary Putnam in 1967. Accelerated by the pace of computer technology in the mid-20th century, the theory caught on with psychologists, philosophers, and a new discipline called cognitive science.

At its core, this is a theory of information. It goes something like this: the brain receives sensory input from the world and, through its own biology, performs algorithms that produce mental and behavioral output. Computer scientists also use input-output frameworks that map neatly onto these assumptions, so it's not uncommon to hear a person compared to a Turing machine, the abstract model of a computer. In this theory, the "software" of the mind runs on the "hardware" of the brain.

Through this lens, the human mind is an engineering problem that we can break into rule systems and a continuous training process. Consciousness becomes a computational exercise limited by algorithmic sophistication, processing power, and time. That is artificial intelligence.

This raises provocative questions about human nature, too. What is your mother but a collection of rules that reliably produce the emotions and behaviors we call her "personality"? If a machine had all of those rules, could we distinguish it from her?

The Problems

This premise sounds good to an engineer because it is engineering. It invokes several computer science concepts, leading us to assume that the brain:

- Runs a program of rules on a system that performs the work

- Stores and retrieves data in state

- Functions in components that handle information discretely

But each of these concepts is contradicted by experimental evidence. By discussing each, we learn more about how different your brain really is from a computer.

1. You are not an OS

First, let's address the assumption that a person's mind is a piece of software running on the hardware of their brain.

By trying to explain the relationship between your mind and brain, the computational theory implies that the mind and brain are fundamentally different phenomena. This idea is called mind-body dualism, and it has taken many forms over many centuries.

If we accept this premise, then it is not a stretch to assume that the mind can exist apart from the brain. In many religions, this maps to the concept of the soul, or essence of a person, that persists after death. In an unexpected echo, a computing approach might define the mind as a set of defined processes (a program) that can run on another device. And this is the cinematic AI we know, isn't it? Discrete, consistent, portable... It is funny to notice the similarities between these claims. The key difference is that, to an engineer, the essence of a person can be described physically rather than metaphysically.

This is inaccurate for several reasons. First, the mind and the brain are (surprisingly) the same thing. We know this because electrical activity in the brain often happens before conscious thought. In other words, the brain doesn't just react to instructions, like we expect hardware to do. Likewise, when we learn things, our repeated decisions can alter neural structures that in turn affect our behavior and cognition. Our mental lives are the product of our brain functioning.

So there are flaws in framing the mind solely as a software project. If a mind were just a program, then we would eventually be able to develop that program. It would just be a problem limited by time and computational power. However, the mind is an emergent property of the brain. Because it is an embodied phenomenon, we will never fully reproduce it without also considering that physical system. Engineers are programming software using representations of neural networks and not neural networks themselves.

This doesn't mean an artificial brain is science fiction. In fact, initiatives like the Human Connectome Project may give us the roadmap we need to deepen our descriptions of the brain itself. What we do know is that reproducing a human mind could be more like programming an embedded system than programming a computer. We first need to know what machine we are using.

AI programs often impress us by solving complex problems, and sometimes they can say and do things that convince us of their self-awareness, but they lack the fundamental physicality of our minds.

2. You are not a database

When we are born, we have a starter pack of sorts.

This starter pack includes our reflexes, five senses, and ability to learn. It makes newborns highly adaptable the moment they enter the world. We know that even though babies have reduced vision, they know how to identify their mother's face. Through experiments, we also know that babies have surprising reflexes: they grasp objects placed in their hands, they turn towards stimuli that touch their faces, and they suck on objects placed in their mouths, which facilitates breastfeeding. From there, all of the thoughts and behaviors that eventually become "us" develop through interaction and learning.

Then there are the things we don't have: data, rules, knowledge, algorithms, models, processors, subroutines, controllers, or symbols. These components allow computers to perform tasks, but we never develop them.

Most importantly, we don't store information as symbolic representations. A psychologist will never discover a copy of your favorite song in your brain in the same way we could locate a file on a server at Spotify. They won't discover any images, words, or scenes from your life, either.

In this way, our brains are not computers. Computers process information encoded into formats that they can use, such as bits grouped into bytes, which are in turn combined into increasingly complex patterns. These patterns can stand for things like words, images, and videos. Ultimately, computers move these representations around in physical storage on their components. To retrieve a specific representation, a computer literally locates it on a chip. Computers can also do things like copy, manipulate, and transform these representations, but even the rules they use to do so are localized within themselves.
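As a minimal sketch of what "locating" a representation means, the Python snippet below treats a `bytearray` as a stand-in for physical storage. The names `storage`, `store`, and `retrieve` are illustrative assumptions, not a real storage API, and real hardware is far more layered than this.

```python
# Hypothetical stand-in for a chunk of physical storage: 64 addressable bytes.
storage = bytearray(64)


def store(address: int, text: str) -> int:
    """Encode text as UTF-8 bytes, write them at address, return the length."""
    data = text.encode("utf-8")
    storage[address:address + len(data)] = data
    return len(data)


def retrieve(address: int, length: int) -> str:
    """Read length bytes starting at address and decode them back to text."""
    return bytes(storage[address:address + length]).decode("utf-8")


length = store(10, "favorite song")
copy = retrieve(10, length)  # an exact copy of what was written
print(copy)                  # favorite song
print(storage[10])           # 102, the byte that stands for "f"
```

The point of the sketch is that the representation lives at a specific place, and retrieval returns exactly what was written, every time.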

This idea of manipulating physical representations is the basis of the Physical Symbol System Hypothesis. Allen Newell and Herbert Simon concluded that we can manipulate strings of bits to represent anything as a symbol on a digital computer, and arrange those symbols in systems that infer information about their relationships. To them, these were grounds for a "general intelligence". This sounds a lot like Descartes, too, who believed all knowledge consists of representations that can be atomized and reconstituted from basic building blocks.

The problem with applying this to the human mind is that symbols are supposed to have general applications. The symbol "0" can be used across processes like addition and subtraction just as easily as it can be used to represent a character in a cipher. But the encodings in our brain don't have this same flexibility.
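The generality of a symbol like "0" can be sketched in a few lines of Python. This is only an illustration: the `CIPHER` mapping and the `decode` helper are hypothetical, made up for this example.

```python
ZERO = "0"  # one symbol, reused unchanged in two unrelated processes

# Process 1: arithmetic. The symbol is interpreted as a number.
total = int(ZERO) + 7

# Process 2: a toy substitution cipher (hypothetical mapping) in which the
# same symbol stands for a letter.
CIPHER = {"0": "o", "1": "l", "3": "e", "4": "a"}


def decode(message: str) -> str:
    """Replace each cipher symbol with its letter; keep other characters."""
    return "".join(CIPHER.get(ch, ch) for ch in message)


print(total)            # 7
print(decode("h3ll0"))  # hello
```

The same stored symbol serves both processes without modification, which is the kind of flexibility our own encodings appear to lack.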

Human memory is not a storage process. If it were, we could repeat a conversation we had yesterday, verbatim, without any mistakes. We could draw every detail of the Taj Mahal after seeing it in a dream. If we take this to the extreme, we would live each moment totally overwhelmed by the immense detail of our perfect memories. Eventually, like a computer, we would run out of storage.

When we remember something, we activate areas of our brain that were changed by observation and experience. This superficially resembles a computer, but it is much more mysterious. Psychologists have found that memories do not correspond to particular neurons, so there are no physical representations within us that we can isolate and extract to "read minds". Rather, memories seem to correspond to the coordinated activation of many neurons across the brain.

A memory is evoked rather than retrieved. To sing a song "from memory", we draw upon connections that were made through practice. That recall is often imperfect. Also, unlike a computer, our recall is circumstantial. We may be able to sing this song in a car, but not on a stage.

Psychologists still know relatively little about how memory works. Even so, our current knowledge suggests that human memory differs fundamentally from computer memory.

3. You are more than the sum of your parts

Finally, and perhaps most significantly, the brain can't be cleanly divided into functional pieces. Computers are made of discrete parts that each perform tasks in a system, but our brains often defy neat, localized categories. An engineer might call our system leaky.

Established ideas in psychology about localization, such as strict regions for memory and speech, have been undone by recent studies showing that the brain can adapt to life-changing damage. In neuroscience, this is called plasticity. For example, areas of the brain usually associated with vision can be repurposed for language processing in blind individuals reading braille. If we can't reduce brain functions into parts, we drift even further from computational theory.

Our minds emerge from billions of neurons interacting at a given time. This is not unlike how a nation emerges from millions of human relationships. A single relationship can be described, but this knowledge does not necessarily help us predict the outcome of political elections. We can only do that by understanding the emergent properties of the system.

In another example, we can compare brains to economies. A single business exchange is easy to analyze, but the economy itself contains more characteristics than simply the sum total of all business exchanges. Economies, like minds, are notoriously fussy when we try to reduce them to mathematical models. If we could do this, hedge funds would not spend so much money on PhD-level mathematicians, and we would be able to predict the stock market.

Where's your head at?

We must understand that, with AI, we are not building a human mind. The mind is not software. It is a phenomenon that occurs as a characteristic of our physical brain. Thus, creating a human-like AI isn't a matter of algorithmic complexity or processing power. Software engineers can use this clarity to become better stewards of this powerful technology.

It seems to me that, among software engineers, the brain-computer metaphor stems from two seemingly compatible premises. On one hand, it appears that all computers are capable of behaving intelligently. On the other, all computers are information processors. Taken together, these premises may lead us to believe that anything intelligent must be an information processor. But as we touched on, the brain is much more.

Metaphors enable us to make unexpected connections. They can also constrain our thinking when left unexamined. The computational theory of mind initially expanded our understanding of the brain, even though it has been thoroughly debunked. By turning away from this old theory, we face the incredible mystery of our own nature.